In theory, you could use other pretrained models (e.g., the excellent models from TF Hub), other frameworks, or AutoML tools (e.g., GCP Vertex AI AutoML for text). However, there are several reasons why HuggingFace and TensorFlow were a good fit for my project. HF supports a broad set of pretrained models and lots of well-designed tools and methods; e.g., their fast tokenizer and their model loading and saving scripts work really well. HF also supports TensorFlow 2.0 (Keras) based model and training abstractions, which is something I am familiar with.

# How to Fine-tune BERT

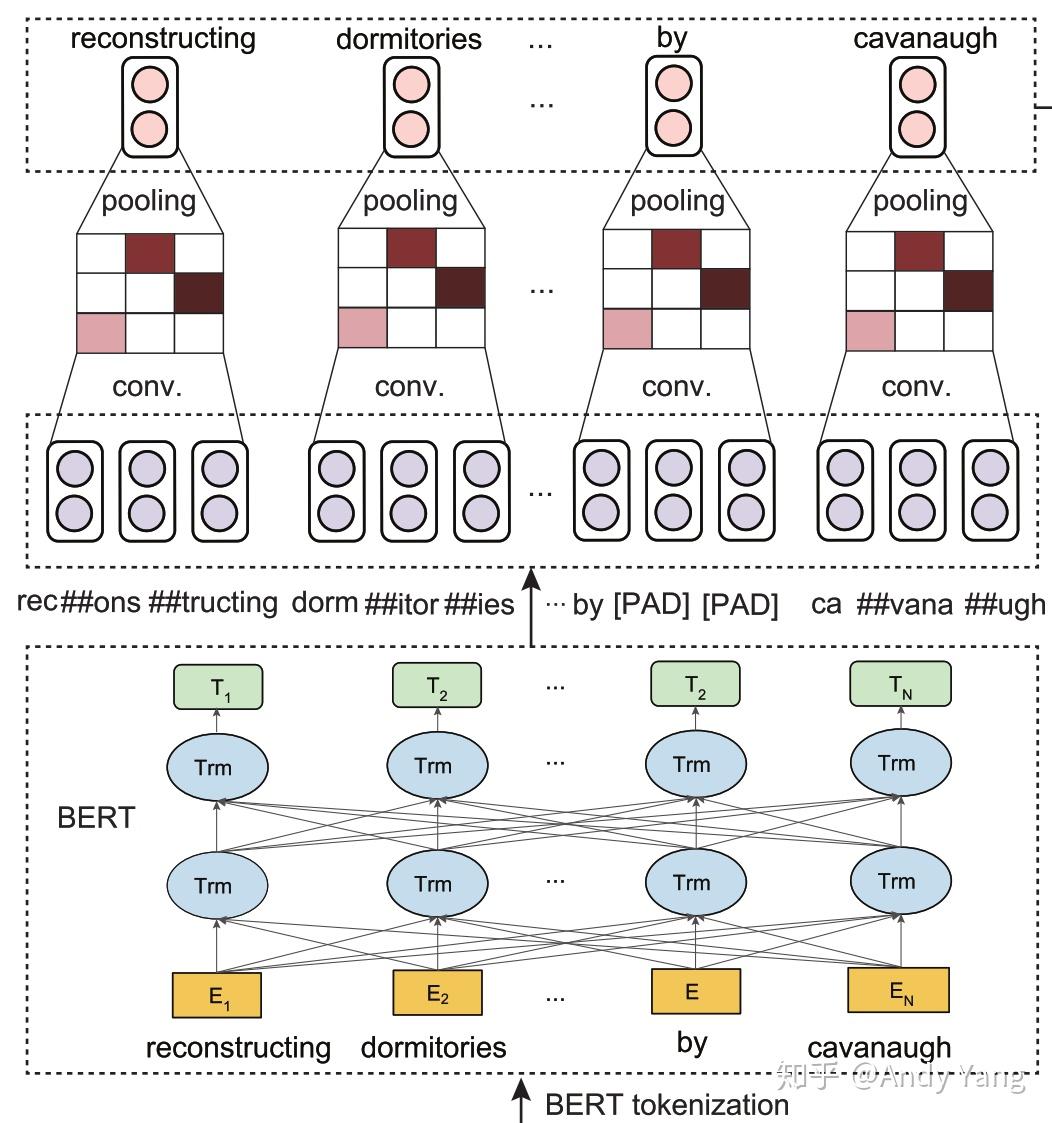

The HuggingFace transformers library makes it really easy to work with all things NLP, with text classification being perhaps the most common task. The library began with a PyTorch focus but has now evolved to support both TensorFlow and JAX! I recently ran some experiments to train a model (more like fine-tune a pretrained model) to classify tweets as containing politics-related content or not. The goal was to train the model on a relatively large dataset (~7 million rows) and use the resulting model to annotate a dataset of 9 million tweets, all on moderately sized compute (a single P100 GPU). I used the HuggingFace transformers library with the TensorFlow 2.0 Keras-based models.

Shuffle and chunk large datasets into smaller splits (bad things happen if you forget to shuffle, e.g., a split containing a single label).
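To make the shuffle-and-chunk advice concrete, here is a minimal sketch in plain Python. It is not the code I used for the project: the `shuffle_and_chunk` helper, the toy tweet rows, the chunk size, and the seed are all assumptions made up for illustration. It shows how chunking label-sorted data without shuffling produces single-label splits, while shuffling first gives every split a mix of labels.

```python
import random
from collections import Counter


def shuffle_and_chunk(rows, chunk_size, seed=42):
    """Shuffle rows once, then split them into fixed-size chunks.

    Hypothetical helper for illustration only.
    """
    rows = list(rows)                  # copy so the caller's data is untouched
    random.Random(seed).shuffle(rows)  # seeded so the split is reproducible
    return [rows[i:i + chunk_size] for i in range(0, len(rows), chunk_size)]


# Toy data sorted by label (0 = not politics, 1 = politics). Sorted input is
# exactly the situation where unshuffled chunks go wrong.
rows = [(f"tweet {i}", 0) for i in range(500)]
rows += [(f"tweet {i}", 1) for i in range(500, 1000)]

# Chunking without shuffling: every split ends up with a single label.
unshuffled = [rows[i:i + 250] for i in range(0, len(rows), 250)]

# Shuffling first: every split gets a mix of both labels.
chunks = shuffle_and_chunk(rows, chunk_size=250)

for name, splits in [("unshuffled", unshuffled), ("shuffled", chunks)]:
    for idx, split in enumerate(splits):
        label_counts = Counter(label for _, label in split)
        print(name, idx, dict(label_counts))
```

For a dataset that does not fit in memory, the same idea would need a shuffle that works on disk or in batches; the in-memory list here is only meant to show why the order of the two steps matters.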